I recently published a letter in the International Journal of Epidemiology entitled “The case of acoustic neuroma: Comment on: Mobile phone use and risk of brain neoplasms and other cancers” in reply to a paper by Benson et al., who used the Million Women Study to look at cancer risk from mobile phone use. The letter addressed the fact that, instead of reporting all their findings (both negative and positive) in the abstract (which, let's face it, is what most people read), the authors reported only the non-significant effects. The only statistically significant increased risk they found was for acoustic neuroma, which does fit in nicely with the conclusion of the IARC monograph working group. However, they reported this only once the effect had disappeared after pooling the data with the Danish prospective cohort. As I discussed in my letter, a more transparent, and generally more accepted, method would have been to conduct a meta-analysis of all available studies. This meta-analysis (although with a typo) and my letter can be found here (link).


Anyway, this got covered at Microwave News (link):

**************************************************************************************************************************************************

[Edit: unfortunately, I cannot post the full article here, so I will stick to some quotes]

“…In a short letter to the International Journal of Epidemiology (IJE), the Oxford team advises that when the analysis was repeated with data from 2009-2011, “there is no longer a significant association.” Also gone, the team writes, is the “significant trend in risk with duration of use.”

“After ten or more years of phone use, the risk of AN is now only 17% higher with a confidence interval (0.60-2.27) that indicates the small increase is not significant. In their earlier paper, the Oxford group reported that the AN tumor risk more than doubled after ten years and was statistically significant (RR = 2.46, CI = 1.07–5.64).”

“The update comes in response to a letter from Frank de Vocht of the U.K.’s University of Manchester expressing surprise that the Oxford team had not included the finding of the AN risk in its original published abstract —especially given that it “provides further support” for the IARC decision to classify RF radiation as a possible human carcinogen.”

**********************************************************************************************************************************************

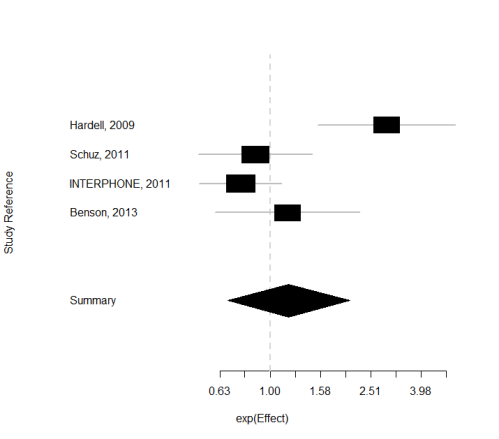

However, the request in the last sentence of the Microwave News report, for an updated meta-analysis, is easily met. So hereby:

| Study | Effect | Lower 95% | Upper 95% |
|---|---|---|---|
| Hardell, 2009 | 2.90 | 1.6 | 5.5 |
| Schuz, 2011 | 0.87 | 0.52 | 1.46 |
| INTERPHONE, 2011 | 0.76 | 0.52 | 1.11 |
| Benson, 2013 | 1.17 | 0.60 | 2.27 |

(Note that I assumed from the authors' reply that the Benson, 2013 figure is the updated result of their study, and NOT the pooled update!)

Summary exp(effect): 1.18 95% CI ( 0.67,2.08 )

Estimated heterogeneity variance: 0.25 p= 0.004
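
For readers who want to check the arithmetic, here is a minimal sketch of a random-effects pooling of the four estimates listed above. It assumes a DerSimonian-Laird model on the log scale, with each standard error back-calculated from the reported 95% interval; the post does not state which method or software produced the summary, so the function name `random_effects` and the whole approach are illustrative assumptions. Because the inputs are the rounded published figures, the output should land close to, but not necessarily exactly on, the summary numbers above.

```python
import math

# (study, relative risk, lower 95% limit, upper 95% limit), as listed in this post
STUDIES = [
    ("Hardell, 2009", 2.90, 1.6, 5.5),
    ("Schuz, 2011", 0.87, 0.52, 1.46),
    ("INTERPHONE, 2011", 0.76, 0.52, 1.11),
    ("Benson, 2013", 1.17, 0.60, 2.27),
]

Z95 = 1.96  # normal quantile for a two-sided 95% interval


def random_effects(studies):
    """DerSimonian-Laird random-effects pooling (an assumed method, see text)."""
    # Work on the log scale; recover each variance from the width of its CI.
    y = [math.log(rr) for _, rr, _, _ in studies]
    v = [((math.log(hi) - math.log(lo)) / (2 * Z95)) ** 2
         for _, _, lo, hi in studies]

    # Fixed-effect (inverse-variance) estimate, needed for Cochran's Q.
    w = [1.0 / vi for vi in v]
    sum_w = sum(w)
    fixed = sum(wi * yi for wi, yi in zip(w, y)) / sum_w
    q = sum(wi * (yi - fixed) ** 2 for wi, yi in zip(w, y))

    # Method-of-moments estimate of the between-study variance (tau squared).
    c = sum_w - sum(wi ** 2 for wi in w) / sum_w
    tau2 = max(0.0, (q - (len(y) - 1)) / c)

    # Re-weight with tau squared added to every within-study variance.
    w_re = [1.0 / (vi + tau2) for vi in v]
    pooled = sum(wi * yi for wi, yi in zip(w_re, y)) / sum(w_re)
    se = math.sqrt(1.0 / sum(w_re))

    return {
        "rr": math.exp(pooled),
        "lower": math.exp(pooled - Z95 * se),
        "upper": math.exp(pooled + Z95 * se),
        "tau2": tau2,
        "q": q,
    }


if __name__ == "__main__":
    res = random_effects(STUDIES)
    print(f"Summary RR: {res['rr']:.2f} "
          f"95% CI ({res['lower']:.2f}, {res['upper']:.2f})")
    print(f"Heterogeneity variance: {res['tau2']:.2f} (Q = {res['q']:.2f})")
```

Swapping the Benson row for a different estimate (for example the pooled update, if that turns out to be the right figure) only requires editing that one tuple.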

Sense about Science


dariuszleszczynski

October 8, 2013

Frank, excellent work. I will quote it extensively in my next column in The Washington Times Communities.


dariuszleszczynski

October 8, 2013

Reblogged this on BRHP – Between a Rock and a Hard Place and commented:

Excellent work by Frank de Vocht, a noted epidemiologist.


FdV

October 8, 2013

Thanks, Darius.


Denis Henshaw

October 8, 2013

It is nice to see Frank quote the facts: the ORs first and then the 95% CIs afterwards. Some epidemiologists continue to commit “the first sin of the epidemiologist”, that is, if the OR is not significant at the 95% level, then it is deemed to be “no effect found” – that is equating the lack of an association at the 95% confidence level, with the lack of an effect. So, again, thank you Frank de Vocht.


FdV

October 8, 2013

Update: I had to edit this post somewhat and the complete text from the Microwave News site has been replaced by some quotes from that article.


Letha I. Hooper

October 22, 2013

Variability in definitions of risk factors and the potential for differential ascertainment of risk factors possibly contributed to the heterogeneity in the affected comparisons. Differing lengths of follow-up may have also resulted in heterogeneity, and studies that were deemed to have an inadequate length of follow-up (16%) may have missed events and therefore biased the results towards smaller effect sizes. Owing to the differing length of follow-up used in the included studies, meta-analysis of hazard ratios instead of odds ratios might have reduced heterogeneity. In contrast with odds ratios, hazard ratios are more likely to be constant over time. Unfortunately, hazard ratios were rarely reported and thus meta-analysis of hazard ratios was not feasible. Another limitation of the data was inconsistency in outcomes: for a given risk factor we would have expected to see an increase in all types of severe outcomes. Thus when evaluating risk factors with inconsistent findings across outcomes, we downgraded the level of evidence.
